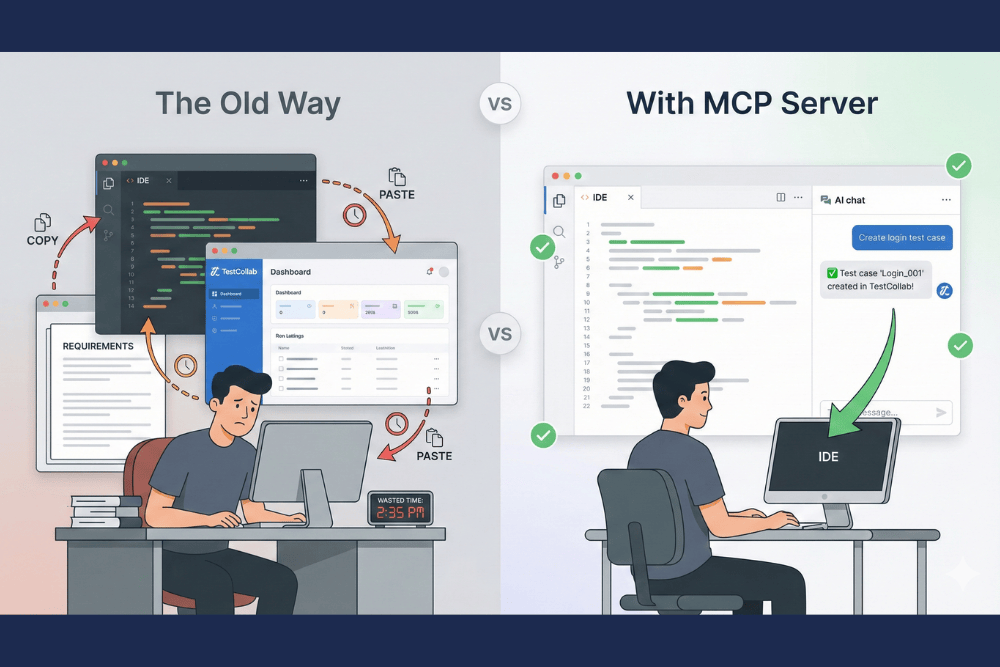

When we launched the TestCollab MCP Server, it supported three operations: list, create, and update test cases. That was enough to prove the concept - AI assistants could generate test cases while you code. But the feedback from early users was clear: they wanted to do more without leaving their editor.

Today, the MCP server supports 17 AI testing tools across test cases, test suites, and test plans. Here's what's new, along with example prompts that show how to put each addition to work.

Organize tests into suites

Test suites give your test cases structure. Previously, you could assign a test case to a suite, but you couldn't create or reorganize suites themselves. Now you can manage your entire suite hierarchy from your AI assistant.

Prompts you can try:

- "Create a new suite called 'Payment Processing' under the 'E-commerce' suite"

- "Show me the full suite tree for this project"

- "Move the 'Legacy Auth' suite under 'Authentication'"

- "Reorder the suites under 'API Tests' so 'Users' comes before 'Orders'"

- "Delete the empty 'Temp' suite"

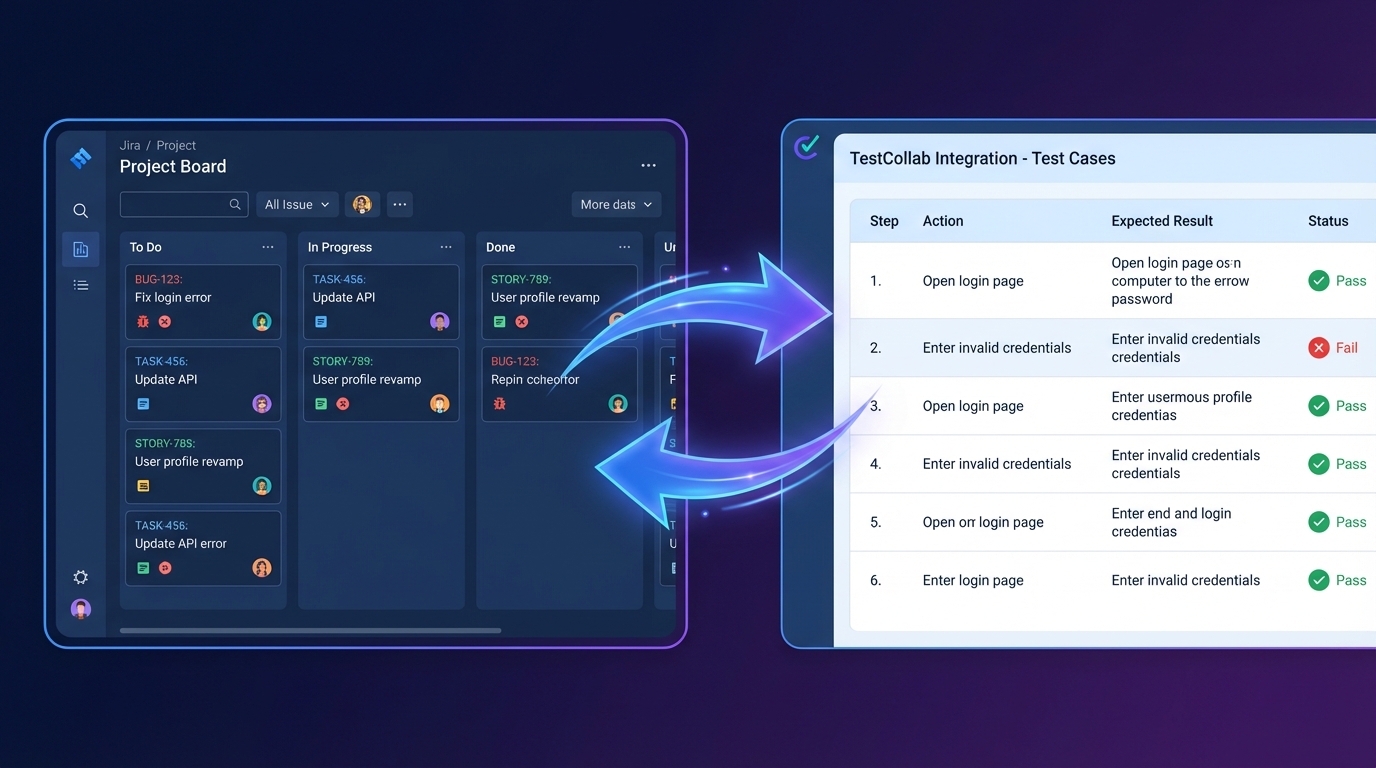
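To make "move" and "reorder" concrete, here is a minimal Python sketch of a suite tree held in memory. The `Suite` class and the `move` and `reorder` helpers are hypothetical illustrations that assume suite titles are unique; they are not the MCP server's implementation or TestCollab's API. In practice, your AI assistant calls the server's suite tools for you.

```python
from dataclasses import dataclass, field


@dataclass
class Suite:
    """Hypothetical in-memory stand-in for a test suite node."""
    title: str
    children: list["Suite"] = field(default_factory=list)

    def find(self, title: str) -> "Suite | None":
        """Depth-first search for a suite by title."""
        if self.title == title:
            return self
        for child in self.children:
            match = child.find(title)
            if match is not None:
                return match
        return None

    def parent_of(self, title: str) -> "Suite | None":
        """Return the suite whose direct children include `title`."""
        for child in self.children:
            if child.title == title:
                return self
            found = child.parent_of(title)
            if found is not None:
                return found
        return None


def move(root: Suite, title: str, new_parent_title: str) -> None:
    """Detach a suite from its current parent and append it under another."""
    node = root.find(title)
    old_parent = root.parent_of(title)
    new_parent = root.find(new_parent_title)
    if node is None or old_parent is None or new_parent is None:
        raise ValueError("suite not found")
    if node.find(new_parent_title) is not None:
        raise ValueError("cannot move a suite under itself or a descendant")
    old_parent.children = [c for c in old_parent.children if c is not node]
    new_parent.children.append(node)


def reorder(root: Suite, parent_title: str, ordered_titles: list[str]) -> None:
    """Rearrange a parent's direct children to match the given title order."""
    parent = root.find(parent_title)
    if parent is None:
        raise ValueError("suite not found")
    by_title = {child.title: child for child in parent.children}
    if sorted(by_title) != sorted(ordered_titles):
        raise ValueError("ordered_titles must list every child exactly once")
    parent.children = [by_title[t] for t in ordered_titles]


# Usage: mirrors two of the prompts above.
root = Suite("Project", [
    Suite("Authentication"),
    Suite("Legacy Auth"),
    Suite("API Tests", [Suite("Orders"), Suite("Users")]),
])
move(root, "Legacy Auth", "Authentication")
reorder(root, "API Tests", ["Users", "Orders"])
```

The cycle check in `move` is the one design choice worth noting: a suite can't be moved under itself or one of its own descendants, otherwise the tree would detach from the root.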

Create and manage test plans

Test plans are how you organize test execution - which test cases to run, in what configurations, assigned to which testers. Managing these from an AI assistant means you can spin up a test plan as part of your development conversation.

Prompts you can try:

- "Create a regression test plan with all test cases tagged 'smoke'"

- "List all test plans created this month"

- "Show me the progress on test plan 'Sprint 42 Regression'"

- "Create a test plan called 'Login Refactor' with all test cases from the 'Authentication' suite, configured for Chrome and Firefox"

- "Archive the 'v2.1 Release' test plan"
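Conceptually, a prompt like the 'Login Refactor' one boils down to two steps: pick the test cases, then pair them with configurations. The sketch below shows that idea in plain Python. The `Case` record, `select_cases`, and `build_plan` are hypothetical names, and modeling a plan as one run per case and configuration pair is an assumption for illustration, not a description of how TestCollab stores plans.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Case:
    """Hypothetical stand-in for a test case record."""
    id: int
    title: str
    suite: str
    tags: frozenset = frozenset()


def select_cases(cases, *, tag=None, suite=None):
    """Keep cases matching the given tag and/or suite (None means 'any')."""
    return [
        c for c in cases
        if (tag is None or tag in c.tags) and (suite is None or c.suite == suite)
    ]


def build_plan(title, cases, configurations):
    """Pair every selected case with every configuration (assumed model)."""
    return {
        "title": title,
        "runs": [
            {"case_id": c.id, "configuration": cfg}
            for c, cfg in product(cases, configurations)
        ],
    }


# Usage: sample data invented for this sketch.
cases = [
    Case(1, "Login with valid credentials", "Authentication", frozenset({"smoke"})),
    Case(2, "Login with wrong password", "Authentication"),
    Case(3, "Add item to cart", "Checkout", frozenset({"smoke"})),
]

login_plan = build_plan(
    "Login Refactor",
    select_cases(cases, suite="Authentication"),
    ["Chrome", "Firefox"],
)
smoke_plan = build_plan("Regression", select_cases(cases, tag="smoke"), ["Chrome"])
```

With two Authentication cases and two browsers, `login_plan` ends up with four runs, which is a quick way to sanity-check how large a plan will get before you ask for it.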

AI test case generation in practice

Here's what a typical session looks like with the expanded MCP tools. You're working on a new checkout flow:

Four prompts, and you've gone from zero to a structured, plannable set of tests.

Full codebase test generation (YOLO mode)

Feeling brave? Enable auto-accept mode in your AI client — Claude Code's --dangerously-skip-permissions, Cursor's YOLO mode, etc. — point it at your entire codebase, and walk away. Go grab a coffee. Maybe two.

"Scan this entire codebase. Create suites mirroring the module structure and generate test cases with detailed steps for every feature you find."

The AI will read your source files, routes, controllers, and models, create a suite hierarchy matching your code structure, and generate test cases with steps for every feature it discovers. No prompting between steps. It just... keeps going.
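If you're wondering what "a suite hierarchy matching your code structure" might look like, here is a rough Python sketch that derives suite paths from file paths. The `suite_paths` function, the `src` root, and the sample file names are all assumptions made for illustration; the AI's actual choices will depend on your codebase and prompt.

```python
from pathlib import PurePosixPath


def suite_paths(source_files, root="src"):
    """Map source files to suite paths that mirror their directories."""
    suites = set()
    for file in source_files:
        try:
            relative = PurePosixPath(file).relative_to(root)
        except ValueError:
            continue  # skip files outside the source root
        parts = relative.parent.parts
        # Register every ancestor so parent suites exist before children.
        for depth in range(1, len(parts) + 1):
            suites.add(parts[:depth])
    return [" > ".join(parts) for parts in sorted(suites)]


# Usage: hypothetical file list.
files = [
    "src/checkout/cart.py",
    "src/checkout/payment/stripe.py",
    "src/auth/login.py",
    "README.md",
]
paths = suite_paths(files)
# paths == ["auth", "checkout", "checkout > payment"]
```

Sorting the tuples before joining guarantees that a parent such as `checkout` always appears before its child `checkout > payment`, so suites can be created top-down.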

What to expect when you get back:

| Codebase size | Approximate time | Output |
|---|---|---|
| Small (< 20 files) | ~5 minutes | 20-40 test cases across 5-10 suites |
| Medium (~50 files) | ~20-30 minutes | 50-100+ test cases across 15-25 suites |
| Large (100+ files) | ~45-60 minutes | 150+ test cases across 30+ suites |

Want a complete hands-on walkthrough? Our step-by-step tutorial on automated test case generation with Claude Code takes you from setup to a fully populated test suite with real examples.

Suite structures and test case titles are generally solid — the AI infers these well from code structure and naming. But test steps for UI flows can get... creative. The AI hasn't actually seen your UI, so it's doing its best impression of someone who read the docs but never opened the app. Review before you run.

For best results, give your TestCollab project a detailed project description — specify your application type, tech stack, and key user roles. The more context you provide upfront, the less fiction you'll find in the output.

MCP server setup

The MCP server no longer requires a hosted endpoint. It runs locally via npx with environment variable authentication - no HTTP headers, no remote server dependency.

    {
      "mcpServers": {
        "testcollab": {
          "command": "npx",
          "args": ["-y", "@testcollab/mcp-server"],
          "env": {
            "TC_API_TOKEN": "your-api-token",
            "TC_API_URL": "https://api.testcollab.io",
            "TC_DEFAULT_PROJECT": "16"
          }
        }
      }
    }

This works with Claude Code, Claude Desktop, Cursor, Windsurf, Codex CLI, and any other MCP-compatible client. See the setup guide for client-specific configuration.
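If you'd rather not paste your token into a file by hand, a small script can assemble the same configuration. This is a minimal sketch: `build_mcp_config` is a hypothetical helper, reading the token from a `TC_API_TOKEN` shell variable is an assumption about your setup, and where the resulting JSON belongs depends on your client.

```python
import json
import os


def build_mcp_config(token, default_project, api_url="https://api.testcollab.io"):
    """Build the mcpServers entry shown above as a Python dict."""
    return {
        "mcpServers": {
            "testcollab": {
                "command": "npx",
                "args": ["-y", "@testcollab/mcp-server"],
                "env": {
                    "TC_API_TOKEN": token,
                    "TC_API_URL": api_url,
                    # The example config passes the project ID as a string.
                    "TC_DEFAULT_PROJECT": str(default_project),
                },
            }
        }
    }


# Usage: fall back to the placeholder if the variable is not set.
token = os.environ.get("TC_API_TOKEN", "your-api-token")
config = build_mcp_config(token, default_project=16)
config_json = json.dumps(config, indent=2)
print(config_json)
```

Paste the printed JSON into your client's MCP configuration, following the setup guide for the exact location.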

All 17 MCP tools at a glance

| Area | Tools |
|---|---|
| Test cases | list, get, create, update |
| Test suites | list, get, create, update, delete, move, reorder |
| Test plans | list, get, create, update, delete |
| Project context | get project context (suites, tags, users, custom fields) |
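As a quick sanity check on the count, the table can be written out as data and tallied. The labels below are the operation names from the table, not the server's actual tool identifiers, and the "launch" set comes from the three operations mentioned at the top of this post.

```python
# Operation names copied from the table above (not real tool identifiers).
TOOLS_BY_AREA = {
    "Test cases": ["list", "get", "create", "update"],
    "Test suites": ["list", "get", "create", "update", "delete", "move", "reorder"],
    "Test plans": ["list", "get", "create", "update", "delete"],
    "Project context": ["get project context"],
}

# The original release covered list, create, and update for test cases.
LAUNCH_OPERATIONS = {"list", "create", "update"}

total = sum(len(ops) for ops in TOOLS_BY_AREA.values())
added_since_launch = total - len(LAUNCH_OPERATIONS)

print(f"{total} tools in total, {added_since_launch} added since launch")
```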

More example prompts for AI testing

Beyond the basics, here are prompts that show the depth of what's possible:

- "What test cases exist for the password reset feature?"

- "Update test case 1502 - the expected status code changed from 200 to 201"

- "Create a test case for the new /api/users endpoint with steps for GET, POST, and DELETE"

- "List all test plans with status 'active'"

- "Show me test cases tagged 'flaky' with priority high"

- "Create a suite structure: API Tests > Users, Orders, Products"

What's next

The MCP server is open source on GitHub and available as an npm package. We're actively working on test execution recording and deeper CI/CD integration.

Try it out, and let us know what workflows you build. Visit the MCP Server integration page for the full setup guide and capability reference.