In late 2025, OpenAI published something that made us rethink what QA engineering looks like in an agent-first world. Their engineering team built and shipped a product with roughly a million lines of code - and zero lines were written by a human. Every line - application logic, tests, CI configuration, documentation - was generated by Codex agents. Humans designed the systems. Agents did the work.

They called the discipline behind this harness engineering: the practice of designing environments, scaffolding, and feedback loops that enable AI agents to do reliable software work autonomously.

This is not a hypothetical future. It is happening now. And for QA teams, it changes everything about where quality comes from and who is responsible for it.

From Writing Code to Designing Environments

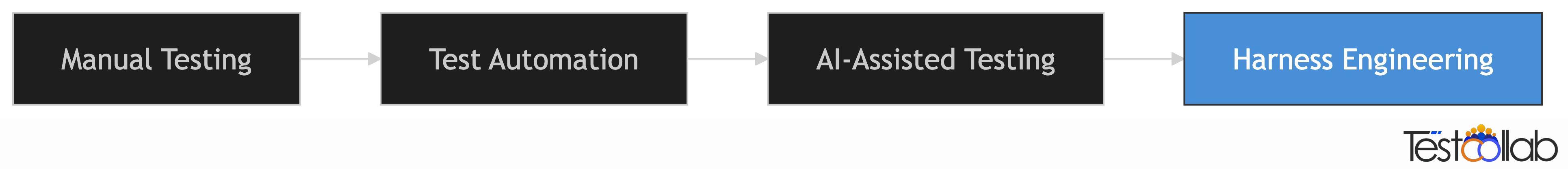

QA has been on a long arc of abstraction. Manual testing gave way to test automation. Automation gave way to AI-assisted testing. Each shift moved testers further from executing individual checks and closer to designing systems that do the checking for them.

Harness engineering is the next step on that arc.

In a harness-engineered workflow, the engineer's job is not to write code or even write tests. It is to build the environment - the guardrails, the feedback loops, the documentation, the tooling - that allows an agent to write, test, and ship code reliably.

For QA professionals, this means the most valuable skill is no longer writing test scripts. It is designing the systems that make quality reproducible, enforceable, and scalable - whether the code is written by a human or an agent.

The QA Bottleneck OpenAI Hit

Here is the part that should resonate with every QA leader: as OpenAI's agent throughput increased, their bottleneck became human QA capacity.

Sound familiar? Every team that has scaled engineering output has hit this wall. More features ship faster, but QA review cannot keep up. The typical response is to hire more testers or cut corners on coverage. Neither works long-term.

OpenAI took a different approach. They made the application itself legible to agents:

- Bootable per worktree - each agent gets its own isolated instance of the app to test against

- Chrome DevTools Protocol wired into agent runtime - agents can take screenshots, inspect DOM, and navigate the UI

- Logs and metrics exposed via local observability stack - agents query logs with LogQL and metrics with PromQL

- Ephemeral environments - each agent's test environment gets torn down after the task completes
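
The isolation and teardown properties in the list above can be sketched in a few lines. This is a minimal illustration, not OpenAI's implementation: `port_for_worktree`, `ephemeral_env`, and the port range are hypothetical names and values chosen for the example.

```python
import hashlib
import shutil
import tempfile
from contextlib import contextmanager
from pathlib import Path

BASE_PORT = 20000  # assumption: start of a port range reserved for agent instances
PORT_SPAN = 10000  # assumption: size of that range


def port_for_worktree(worktree: str) -> int:
    """Derive a stable port from the worktree path so parallel agents rarely collide."""
    digest = hashlib.sha256(worktree.encode("utf-8")).hexdigest()
    return BASE_PORT + int(digest, 16) % PORT_SPAN


@contextmanager
def ephemeral_env(worktree: str):
    """Create a scratch data directory for one task and always remove it afterwards."""
    data_dir = Path(tempfile.mkdtemp(prefix="agent-env-"))
    try:
        yield {"worktree": worktree, "port": port_for_worktree(worktree), "data_dir": data_dir}
    finally:
        # Teardown runs even if the agent's task raised an exception.
        shutil.rmtree(data_dir, ignore_errors=True)
```

The point of the context manager is that teardown is not something the agent has to remember: leaving the `with` block removes the environment whether the task succeeded or failed.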

The lesson for QA teams: if your test environment is not legible to automated tools and AI agents, it will become your bottleneck. Investing in infrastructure for AI in software testing is no longer optional - it is the critical path.

Repository Knowledge as the System of Record

One of OpenAI's earliest lessons was that dumping everything into one giant instruction file does not work. Context is a scarce resource. When everything is marked as important, nothing is.

They tried the "one big AGENTS.md" approach and it failed. The file became stale, agents could not tell what was still true, and it was impossible to verify mechanically.

Instead, they treated their instruction file as a table of contents - roughly 100 lines pointing to deeper, structured sources of truth elsewhere. Design docs, architecture guides, execution plans, and quality scores all lived in a structured docs directory and were versioned alongside the code.
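
A table-of-contents file is only useful if it stays short and its pointers stay valid, and both properties can be checked mechanically. The sketch below is one way to do that, assuming the index uses markdown links with relative paths. `check_index` and the 100-line budget are our own illustrative choices, not something taken from OpenAI's tooling.

```python
import re
from pathlib import Path

MAX_INDEX_LINES = 100  # assumption: budget based on the "roughly 100 lines" figure
# Captures the target of a markdown link, stopping before any #fragment.
LINK_PATTERN = re.compile(r"\[[^\]]*\]\(([^)#\s]+)")


def check_index(index_path: Path, max_lines: int = MAX_INDEX_LINES) -> list[str]:
    """Return a list of problems: index too long, or relative links that point nowhere."""
    text = index_path.read_text(encoding="utf-8")
    problems = []
    line_count = len(text.splitlines())
    if line_count > max_lines:
        problems.append(f"{index_path.name} has {line_count} lines; the limit is {max_lines}")
    for target in LINK_PATTERN.findall(text):
        if "://" in target:
            continue  # external URLs are out of scope for this check
        if not (index_path.parent / target).exists():
            problems.append(f"broken pointer: {target}")
    return problems
```

Run as a CI step, a non-empty result fails the build, which keeps the index from quietly going stale.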

This maps directly to QA. Think about where your quality standards live today:

- Are acceptance criteria in Slack threads that disappear after 90 days?

- Are test strategies in someone's head?

- Are regression suites documented in a spreadsheet that nobody updates?

Test cases and plans need to live in a shared, transparent system - visible to every team member, not buried in individual documents. Tools like TestCollab give the whole team visibility into what is being tested and why, with BDD integrations that bridge the gap between repository-level specs and structured test management.

Enforcing Quality Through Guardrails, Not Reviews

OpenAI's codebase is kept coherent not through code reviews but through mechanical enforcement. Custom linters check naming conventions, file size limits, structured logging, and architectural boundaries. Dependency directions between layers are validated by structural tests. When a lint fails, the error message itself contains remediation instructions that the agent can act on.
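
To make the idea concrete, here is a minimal sketch of a structural check that validates dependency direction and puts the remediation in the error message. The layer names and the `check_imports` function are hypothetical. The source does not describe how OpenAI's linters are implemented, so treat this as one possible shape rather than their approach.

```python
import ast

# Assumption: a hypothetical layering, lowest layer first. A module may import
# from its own layer or from layers below it, never from layers above.
LAYERS = ["domain", "service", "api"]


def check_imports(module_layer: str, source: str) -> list[str]:
    """Return lint errors, each with a remediation hint, for upward imports."""
    rank = {name: i for i, name in enumerate(LAYERS)}
    errors = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            names = [node.module]
        else:
            continue
        for name in names:
            top = name.split(".")[0]
            if top in rank and rank[top] > rank[module_layer]:
                errors.append(
                    f"line {node.lineno}: '{module_layer}' must not import '{top}'. "
                    f"Fix: move the shared code down into '{module_layer}' or a lower "
                    f"layer, or invert the dependency with an interface."
                )
    return errors
```

The "Fix:" clause matters as much as the detection: it gives an agent a next action instead of a bare failure.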

The key insight: once a quality rule is encoded, it applies everywhere at once. No reviewer fatigue. No "we usually do it this way but sometimes we don't." No tribal knowledge.

For QA teams, this is the shift-left philosophy taken to its logical conclusion. Instead of catching problems in review or testing, you make entire categories of problems structurally impossible:

- Boundary validation - data shapes are parsed at system boundaries, not trusted implicitly

- Architectural constraints - code can only depend in approved directions, enforced by linters

- Naming and structure conventions - enforced mechanically, not by memory
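
Boundary validation is the easiest of these to illustrate. In the sketch below, untrusted input is parsed once into a typed object, and everything past the boundary works with that object instead of a raw dictionary. `OrderRequest` and its fields are a hypothetical payload invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderRequest:
    """Hypothetical payload shape used only for this illustration."""
    order_id: str
    quantity: int


def parse_order_request(raw: object) -> OrderRequest:
    """Parse untrusted input once at the boundary; raise ValueError on a bad shape."""
    if not isinstance(raw, dict):
        raise ValueError("payload must be a JSON object")
    order_id = raw.get("order_id")
    quantity = raw.get("quantity")
    if not isinstance(order_id, str) or not order_id:
        raise ValueError("order_id must be a non-empty string")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(quantity, bool) or not isinstance(quantity, int) or quantity < 1:
        raise ValueError("quantity must be a positive integer")
    return OrderRequest(order_id=order_id, quantity=quantity)
```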

Garbage Collection: Fighting Entropy Continuously

Here is a detail from the OpenAI article that every QA team should internalize: their team used to spend every Friday - 20% of the engineering week - cleaning up what they called "AI slop." Agents replicate patterns that exist in the codebase, including uneven or suboptimal ones. Over time, this leads to drift.

Their solution was to encode "golden principles" into the codebase and build a recurring cleanup process. Background agents scan for deviations, update quality grades, and open targeted refactoring pull requests on a regular cadence.

They describe this as garbage collection and treat technical debt as a high-interest loan: it is almost always better to pay it down continuously in small increments than to let it compound.

The QA parallel is unmistakable:

- Flaky tests that nobody fixes slowly erode trust in the entire suite

- Stale test cases that reference deprecated features waste execution time and create noise

- Outdated documentation sends new team members (and agents) down wrong paths
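
Flaky tests are a good first target because they can be detected from data most teams already have. The sketch below uses one common working definition, which is our assumption rather than something from the OpenAI article: a test is flaky if it both passed and failed on the same commit. The `(test_name, commit_sha, passed)` record format is also hypothetical.

```python
from collections import defaultdict


def find_flaky_tests(runs: list[tuple[str, str, bool]]) -> dict[str, float]:
    """Flag tests that both passed and failed on the same commit.

    Each run is (test_name, commit_sha, passed). The returned value is the
    share of commits on which the test gave mixed results.
    """
    outcomes = defaultdict(lambda: defaultdict(set))
    for test_name, commit_sha, passed in runs:
        outcomes[test_name][commit_sha].add(passed)
    flaky = {}
    for test_name, by_commit in outcomes.items():
        # A commit with both True and False outcomes has a result set of size 2.
        mixed = sum(1 for results in by_commit.values() if len(results) == 2)
        if mixed:
            flaky[test_name] = mixed / len(by_commit)
    return flaky
```

A test that fails consistently on a commit is not flagged here; that is a real failure, not flakiness.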

Where QA Gets Involved

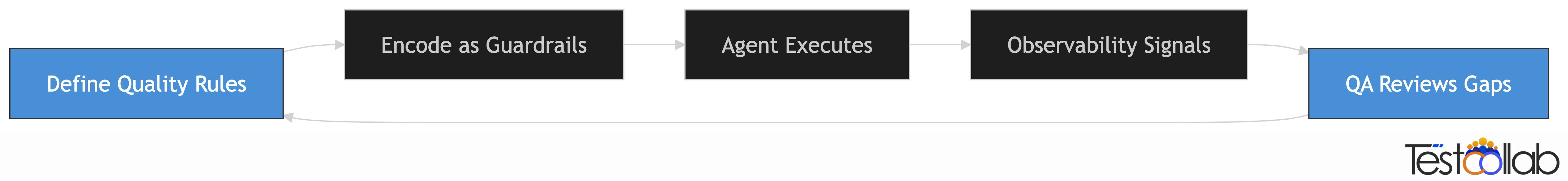

In a harness-engineered workflow, QA does not disappear. The role shifts from executing tests to designing the systems that make quality self-sustaining. Here is where QA professionals add the most leverage:

Defining acceptance criteria and quality gates. QA owns "what good looks like." These definitions get encoded into guardrails - linters, CI gates, architectural tests - that apply to every line of code, whether written by a human or an agent.

Designing agent-legible test environments. QA's expertise in test infrastructure now extends to making environments self-describing for AI agents. Can an agent boot your app in isolation? Can it query your logs? Can it take a screenshot and reason about what it sees?

Reviewing agent-generated tests for coverage gaps. Agents can write tests, but they optimize for what they can see. QA validates that test suites cover edge cases, business logic, and failure modes - not just happy paths.

Owning observability and feedback loops. QA monitors the quality signals - flake rates, coverage trends, regression patterns - and feeds them back into the system. This is the feedback loop that makes the whole system compound over time.

Entropy management. QA drives the cleanup cadence for test suites and quality documentation, ensuring that drift does not silently undermine the system.

The blue nodes in the cycle above - defining rules and reviewing gaps - are where human QA judgment is irreplaceable. Everything else can be increasingly automated.

Harness Engineering Maturity Model for QA Teams

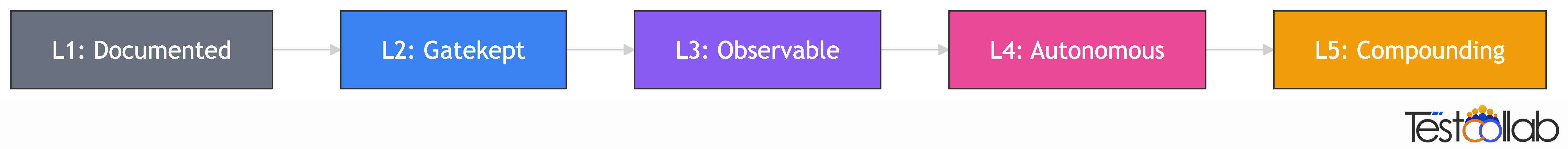

Not every team is ready to go full agent-first. That is fine. What matters is knowing where you are and what to focus on next. We have mapped the harness engineering principles into a five-level maturity model designed to give small teams a clear on-ramp and larger teams a north star.

Level 1: Documented (Foundation)

Who it is for: Small teams just getting started.

What it looks like: Quality standards have been moved from tribal knowledge into structured, shared systems. Basic CI runs your test suite. Test cases and plans live in a test management tool that is visible to the whole team - developers, QA, and product - not buried in individual documents or spreadsheets. Teams using BDD can sync specs to the repository through integrations that tools like TestCollab provide.

Key milestone: All quality rules are written down, versioned, and accessible to every team member.

How to get here:

- Audit your team's tribal knowledge. Find every quality standard that lives in Slack, in someone's head, or in a local document.

- Move quality standards into your repository as versioned documentation.

- Move test cases into a shared test management platform where the whole team has visibility.

- Set up basic CI that runs your test suite on every push.

Level 2: Gatekept (Automated)

Who it is for: Teams with CI/CD in place.

What it looks like: Your top quality rules are encoded as linters or CI gates - not just documented but enforced. Test environments are reproducible per branch. AI-powered tools like QA Copilot can run and analyze your test suite, turning CI from a pass/fail gate into an intelligent feedback loop.

Key milestone: A new contributor - human or agent - can run the full test suite in one command.

How to get here:

- Identify the top 5 quality rules your reviewers repeat most often. Encode them as automated checks.

- Make your test environment reproducible per branch - one command to spin up, one command to tear down.

- Integrate AI testing tools into your CI pipeline to get intelligent analysis, not just pass/fail results.

Level 3: Observable (Agent-Legible)

Who it is for: Teams exploring AI-assisted development.

What it looks like: Test environments are self-describing. Logs, metrics, and DOM are accessible to AI agents. Agents write first-draft tests. QA reviews for coverage gaps rather than writing every test from scratch.

Key milestone: An agent can reproduce a reported bug from a ticket description.

How to get here:

- Structure test documentation as progressive disclosure - an index pointing to deeper docs, not one monolithic test plan.

- Expose logs, metrics, and application state to agent tooling.

- Start using AI agents to generate first-draft test cases, then review and refine them.

Level 4: Autonomous (Agent-Driven)

Who it is for: Teams with mature agent tooling.

What it looks like: Agents handle bug-reproduce-fix-verify cycles end to end. QA focuses on acceptance criteria, edge cases, and quality scoring. Automated entropy cleanup runs on a regular cadence. Humans review by exception, not by default.

Key milestone: Agents open and merge fix PRs within QA-defined guardrails, and humans review only by exception.

How to get here:

- Set up feedback loops with automated quality scoring - flake tracking, coverage drift alerts, regression pattern detection.

- Schedule recurring automated cleanup - weekly scans for stale tests, dead code, and outdated documentation.

- Define clear escalation criteria so agents know when to ask for human judgment.
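
Escalation criteria work best when they are explicit enough to execute. Below is a minimal sketch of what that could look like. The fields, thresholds, and the notion of a "protected path" are all assumptions for illustration, and each team would define its own.

```python
from dataclasses import dataclass


@dataclass
class ProposedChange:
    files_changed: int
    touches_protected_path: bool  # e.g. auth or billing code, as defined by QA
    failed_attempts: int          # times the agent's fix failed verification
    all_gates_green: bool


# Assumptions: illustrative thresholds, to be tuned by each team.
MAX_FILES = 20
MAX_ATTEMPTS = 3


def escalation_reasons(change: ProposedChange) -> list[str]:
    """Return the reasons a human should review; an empty list means proceed."""
    reasons = []
    if not change.all_gates_green:
        reasons.append("quality gates are not green")
    if change.touches_protected_path:
        reasons.append("change touches a protected path")
    if change.files_changed > MAX_FILES:
        reasons.append(f"change spans more than {MAX_FILES} files")
    if change.failed_attempts >= MAX_ATTEMPTS:
        reasons.append(f"{change.failed_attempts} failed fix attempts")
    return reasons
```

Returning reasons instead of a boolean means the escalation itself tells the reviewer what to look at.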

Level 5: Compounding (Self-Healing)

Who it is for: The north star.

What it looks like: Full feedback loops. Agents detect regressions from observability signals, write fixes, validate them, and deploy. QA designs the system and rarely touches individual tests. Quality improves autonomously over time.

Key milestone: Mean time to fix regressions is measured in minutes, not days.

Important: Each level unlocks the next. You cannot skip to Level 4 without Level 2's automated gates in place. Agents without guardrails produce chaos. Start where you are, nail the key milestone, then move on.

Step-by-Step Framework: Applying Harness Engineering to Your QA Process

Here is a practical framework for applying harness engineering principles to your QA process, mapped to the maturity model above. Each step builds on the previous one.

Step 1: Audit Your Tribal Knowledge (Level 1)

Find every quality standard that lives outside your repository or test management system. Check Slack threads, wiki pages, onboarding documents, and the knowledge locked in senior team members' heads. Move it all into versioned, discoverable systems - quality standards into your repo, test cases into a shared test management platform.

Step 2: Encode Your Top 5 Quality Rules (Level 2)

Identify the rules your reviewers repeat most often. "Always validate input at the boundary." "Never commit secrets." "Every API endpoint needs an integration test." Turn these into automated checks - linters, CI gates, or structural tests. Once encoded, they apply everywhere at once.
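
As an example, "Never commit secrets" can be turned into a check with a handful of lines. The sketch below is deliberately small: the two patterns are illustrative, not exhaustive, and a real gate would more likely use a dedicated secret scanner. `find_secrets` is a hypothetical helper name.

```python
import re

# Assumption: two illustrative patterns only; real scanners cover far more.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def find_secrets(path: str, text: str) -> list[str]:
    """Return one finding per matching pattern per line, with a remediation hint."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append(
                    f"{path}:{lineno}: possible {label}. "
                    "Fix: remove it, rotate the credential, and load it from the environment."
                )
    return findings
```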

Step 3: Make Test Environments Reproducible (Level 2)

Your test environment should spin up with one command and tear down with another. It should be reproducible per branch so that parallel work does not interfere. If setting up your test environment requires a wiki page with 15 steps and two people who know the tricks, you are not ready for Level 3.
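
If your stack already runs under Docker Compose, per-branch isolation can come from the project name alone. The sketch below assumes a compose file at the repository root and only builds the commands; the `qa-` prefix and the helper names are hypothetical.

```python
import re


def project_name(branch: str) -> str:
    """Turn a branch name into a lowercase slug usable as an isolated project name."""
    slug = re.sub(r"[^a-z0-9]+", "-", branch.lower()).strip("-")
    return f"qa-{slug}"


def up_command(branch: str) -> list[str]:
    """One command to spin up: each branch gets its own containers and network."""
    return ["docker", "compose", "-p", project_name(branch), "up", "-d"]


def down_command(branch: str) -> list[str]:
    """One command to tear down, removing the branch's volumes as well."""
    return ["docker", "compose", "-p", project_name(branch), "down", "-v"]
```

To run them, pass the lists to `subprocess.run(up_command("feature/login"), check=True)`. Host port collisions between branches still need handling in the compose file itself.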

Step 4: Structure Test Documentation as Progressive Disclosure (Level 3)

Replace monolithic test plans with structured, layered documentation. An index file points to deeper docs by domain. Each domain doc references specific test suites and acceptance criteria. Agents (and new team members) start with a small, stable entry point and follow pointers to what they need - rather than being overwhelmed by everything at once.

Step 5: Make Your Environment Agent-Legible (Level 3)

Expose your application's logs, metrics, and UI state to agent tooling. Can an AI agent boot your app, navigate to a specific page, and verify that a button does what it should? Can it query your error logs to find the root cause of a failure? If not, invest here before trying to automate more of the QA loop.
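
For the log side, the following sketch shows what "an agent can query your error logs" might reduce to if your logs are in Grafana Loki. It builds a LogQL range query URL without sending it. The local base URL, the `app` label name, and the function name are assumptions about your setup.

```python
from urllib.parse import urlencode

# Assumption: a local Loki instance on its default port; adjust for your stack.
LOKI_BASE_URL = "http://localhost:3100"


def error_log_query_url(app: str, start_ns: int, end_ns: int, limit: int = 100) -> str:
    """Build a Loki range-query URL for log lines containing "error" in one app.

    start_ns and end_ns are Unix timestamps in nanoseconds.
    """
    logql = f'{{app="{app}"}} |= "error"'  # the "app" label name is an assumption
    params = urlencode({"query": logql, "start": start_ns, "end": end_ns, "limit": limit})
    return f"{LOKI_BASE_URL}/loki/api/v1/query_range?{params}"
```

Wrapping the query in a small, documented helper like this is part of what makes an environment legible: the agent does not have to rediscover label names or endpoints on every task.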

Step 6: Set Up Feedback Loops and Quality Scoring (Level 4)

Track quality signals systematically: flake rates, coverage trends, regression frequency, time-to-fix. Grade each product domain and architectural layer. When a quality score drops, the system should surface it automatically - not wait for a human to notice during a quarterly review.
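
A grading scheme does not need to be sophisticated to be useful. Here is a minimal sketch, with thresholds that are purely illustrative assumptions: each domain loses a letter grade for every signal outside its threshold, and any domain whose grade got worse since the last run is surfaced.

```python
from dataclasses import dataclass


@dataclass
class DomainSignals:
    flake_rate: float     # share of test runs with inconsistent results, 0..1
    coverage: float       # line coverage, 0..1
    regressions_30d: int  # regressions reported in the last 30 days


def grade(signals: DomainSignals) -> str:
    """Map signals to a letter grade. The thresholds are illustrative assumptions."""
    misses = sum([
        signals.flake_rate > 0.02,
        signals.coverage < 0.80,
        signals.regressions_30d > 2,
    ])
    return "ABCD"[misses]


def dropped(previous: dict[str, str], current: dict[str, str]) -> list[str]:
    """List domains whose grade got worse ("B" sorts after "A"), for automatic alerts."""
    return sorted(d for d in current if d in previous and current[d] > previous[d])
```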

Step 7: Schedule Recurring Automated Cleanup (Level 4)

Set up automated scans that run on a regular cadence - weekly or daily - to detect stale tests, dead code, outdated documentation, and pattern drift. Open targeted cleanup PRs that can be reviewed in under a minute. Remember the principle: tech debt is a high-interest loan. Small, continuous payments beat big painful bursts.

Step 8: Expand Agent Autonomy Gradually (Level 5)

Start with agents writing unit tests. Move to integration tests. Then to full bug-reproduce-fix-verify cycles. At each step, validate that your guardrails hold and your quality signals remain stable. Autonomy without observability is recklessness. Autonomy with strong feedback loops is how quality compounds.
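
The "validate at each step" rule can itself be encoded as a gate. In this sketch, an agent's scope advances by one stage only when no guardrail was violated and flakiness did not rise above its baseline. The stage names and the two signals are assumptions chosen to mirror the progression described above.

```python
# Assumption: stage names mirror the progression described in this step.
AUTONOMY_STAGES = ["unit_tests", "integration_tests", "bug_fix_cycles"]


def next_stage(current: str, guardrail_violations: int,
               flake_rate: float, baseline_flake_rate: float) -> str:
    """Advance one stage only when guardrails held and flakiness did not get worse."""
    stable = guardrail_violations == 0 and flake_rate <= baseline_flake_rate
    index = AUTONOMY_STAGES.index(current)
    if stable and index < len(AUTONOMY_STAGES) - 1:
        return AUTONOMY_STAGES[index + 1]
    return current
```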

Start Where You Are

Harness engineering is not just for teams at OpenAI's scale building with cutting-edge AI agents. The principles - make quality rules explicit, encode them as guardrails, build feedback loops, fight entropy continuously - apply to every QA team, at every level of maturity.

You do not need to go full agent-first tomorrow. Start at Level 1. Write down your quality standards. Make your test cases visible to the whole team. That alone will compound.

The teams that invest in harness engineering now - even at the foundation level - will be the ones ready when AI agents become standard participants in the software development lifecycle. And that future is closer than most people think.

Get started with TestCollab and take the first step toward making your QA process agent-ready.