"What jobs will AI replace?" It is one of the most searched questions on Google right now. And if you are a QA tester, you have probably typed some version of it yourself. Maybe late at night, after reading another headline about an AI agent that can "test your entire app autonomously."

We get it. The anxiety is real.

But here is what we have learned after years of building AI-powered testing tools and watching the space evolve up close: AI will not replace QA testers. It will, however, change what QA looks like. And that distinction matters more than any clickbait headline.

And companies that think they can slash their QA teams and let AI handle the rest? They are not innovating - they are just cutting. You cannot cut your way to market leadership. The companies winning with AI are the ones using it to do more testing, not to employ fewer testers.

AI in QA Testing Is Not New - We Have Been Here Before

Before the current wave of generative AI, software testing already had a long history with automation. Selenium launched in 2004. Appium followed. Record-and-replay tools promised to eliminate manual testing entirely.

None of them replaced testers. They just moved the goalposts.

What is different now is the pace of change. In the last two years, large language models like GPT-4 and Claude have introduced capabilities that genuinely surprised even the skeptics - natural language understanding, code generation, and reasoning that can parse requirements docs and suggest test scenarios without being explicitly programmed.

The technology has improved more in 24 months than it did in the previous decade. That is undeniable. But "improved a lot" and "ready to replace humans" are two very different things.

Where AI in Software Testing Actually Stands Today

Let us be blunt about the current state of AI in software testing. Out of the box, most AI testing solutions are still early-stage. The demos are impressive. The production reality is messier.

Here is what AI does well right now:

- Repeatable scenarios. If you give AI a well-defined happy path - login, add to cart, checkout - it can generate and execute those tests reliably.

- Regression suites. AI excels at running the same checks over and over, flagging when something breaks against a known baseline.

- Test data generation. Need 500 variations of valid and invalid email addresses? AI can produce them in seconds.

- Boilerplate test cases. For standard CRUD operations and form validations, AI-generated tests are often good enough to use directly.

Here is where it still struggles:

- Creative and exploratory testing. The kind of "what happens if I do this weird thing?" instinct that experienced testers develop over years - AI does not have that.

- Edge cases it has never seen. AI is pattern-matching at its core. Novel failure modes, unusual user workflows, and environment-specific quirks still catch it off guard.

- Contextual judgment. Understanding that a 200 ms delay on a checkout page is a critical issue but the same delay on an internal admin panel is fine - that requires business context AI does not possess.
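To make the test data generation point above concrete, here is a minimal sketch of the kind of script an AI assistant might produce when asked for valid and invalid email variations. Everything in it is an assumption for illustration: the sample local parts and domains, the mutation names, and the deliberately simple validation pattern, which is much stricter than real email rules.

```python
import itertools
import re

# Deliberately simple pattern for illustration only. Real-world email
# validation is more permissive and more complicated than this.
SIMPLE_EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9-]+(\.[A-Za-z0-9-]+)+")

# Hypothetical seed values; swap in whatever fits your product.
LOCAL_PARTS = ["alice", "bob.smith", "qa+tag", "user_01", "x"]
DOMAINS = ["example.com", "mail.example.org", "test.co.uk"]

# Each mutation breaks a valid address in one specific, named way,
# so a failing test tells you which rule was violated.
MUTATIONS = {
    "missing_at": lambda e: e.replace("@", ""),
    "double_at": lambda e: e.replace("@", "@@"),
    "missing_domain": lambda e: e.split("@")[0] + "@",
    "missing_local": lambda e: "@" + e.split("@")[1],
    "space_inside": lambda e: e.replace("@", " @"),
    "no_tld": lambda e: e.split("@")[0] + "@localhost",
}


def valid_emails():
    """Every combination of seed local part and seed domain."""
    return [f"{local}@{domain}" for local, domain in itertools.product(LOCAL_PARTS, DOMAINS)]


def invalid_emails():
    """(mutation name, broken address) pairs derived from the valid set."""
    return [
        (name, mutate(email))
        for email in valid_emails()
        for name, mutate in MUTATIONS.items()
    ]


def is_valid(email):
    """Check an address against the simplified pattern above."""
    return SIMPLE_EMAIL.fullmatch(email) is not None
```

With these seeds the script yields 15 valid and 90 invalid addresses. Getting to 500 is a matter of adding seeds and mutations. Deciding which mutations actually matter for your signup flow is still a human call.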

Agentic QA - The Promise and the Gap

The buzzword of 2025 is "agentic QA" - autonomous AI agents that can plan a test strategy, write the tests, execute them, interpret the results, and even file bugs. The vision is compelling. You describe your feature, and the agent handles everything.

In practice? It is not there yet.

We have tested multiple agentic QA approaches extensively. The honest assessment is that they work reasonably well in controlled environments with simple apps. But throw them at a complex enterprise application with role-based access, multi-step workflows, and dozens of integrations, and they become fragile. They need constant human guardrails. They cannot replace domain knowledge - the understanding that this particular customer segment uses the app in a way nobody designed for.

Agentic QA has huge promise. The trajectory is clear and exciting. But if someone tells you it is production-ready for most real-world teams today, they are selling you something.

The Breakthrough: AI as a Brainstorming Partner

Here is what we have found actually works - and this is the insight that should reassure every QA tester reading this.

AI produces dramatically better testing results when you treat it as a brainstorming partner rather than an autonomous replacement. The key is structured collaboration.

Instead of telling AI "test this feature," give it a framework to operate within. When you do, the output quality jumps significantly. A vague prompt like "test the login page" produces generic results. A structured prompt like "brainstorm edge cases for a login page that supports SSO, MFA, and password-based auth across three user roles" produces test scenarios that are genuinely useful.
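One way to make structured prompts repeatable is to build them from named fields instead of typing them freehand. The sketch below is a hypothetical helper, not how QA Copilot or any particular tool works. The class name, field names, role names, and the closing instructions are all assumptions chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class BrainstormRequest:
    """The context a tester supplies before asking for scenarios."""
    feature: str
    auth_methods: list[str] = field(default_factory=list)
    user_roles: list[str] = field(default_factory=list)
    focus: str = "edge cases"


def build_prompt(req: BrainstormRequest) -> str:
    """Turn the structured request into a plain-text prompt."""
    lines = [f"Brainstorm {req.focus} for: {req.feature}."]
    if req.auth_methods:
        lines.append("Supported auth methods: " + ", ".join(req.auth_methods) + ".")
    if req.user_roles:
        lines.append("User roles to cover: " + ", ".join(req.user_roles) + ".")
    # Assumed output instructions; adjust to match your own review process.
    lines.append("Group the scenarios into happy paths and edge cases.")
    lines.append("For each scenario, name the role, the precondition, and the expected result.")
    return "\n".join(lines)


# Role names here are placeholders for whatever roles your app defines.
request = BrainstormRequest(
    feature="a login page",
    auth_methods=["SSO", "MFA", "password"],
    user_roles=["admin", "member", "guest"],
)
prompt = build_prompt(request)
```

The value is less in the code than in the habit: every field you have to fill in is a piece of context the AI would otherwise guess at.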

This is exactly the approach we built into TestCollab's QA Copilot - AI that augments your testing strategy rather than trying to replace it.

What QA Testers Should Actually Be Worried About (And What They Should Not)

Let us be honest about which parts of QA are at risk and which are not.

Roles That Will Shrink

If your entire job is running the same manual regression tests every sprint with no critical thinking involved, that work is going to be automated. Not because AI is brilliant, but because that work is repetitive enough for AI to handle adequately. This was true before generative AI - Selenium and Cypress were already eating into this space. AI just accelerates it.

Roles That Are Safe (and Growing)

Exploratory testing. The ability to poke at an application with creative intent, follow hunches, and discover bugs that no test plan anticipated. This requires human intuition that AI fundamentally lacks.

Test strategy and architecture. Deciding what to test, how much to test, and where the risk lives. This is a judgment call that requires understanding the business, the users, and the codebase. AI can inform this decision, but it cannot make it.

High-fidelity UX and visual testing. This is one area people overlook. Screen glitches, rendering artifacts, layout shifts, animation jank, subtle color mismatches, font rendering issues - AI cannot perceive these in real time the way a human tester can. You notice when a modal flickers for a frame before settling. You notice when a button "feels" sluggish even though it technically responds within the acceptable threshold. You notice when a shadow is slightly off or text overlaps on one specific viewport width. These are high-fidelity visual and experiential issues that require human perception. And as applications get more visually sophisticated, this kind of testing becomes more valuable, not less.

User empathy and the "does this feel right?" test. A checkout flow can pass every functional test and still feel confusing to actual users. Testers who can think like users - who can evaluate the experience, not just the functionality - are irreplaceable.

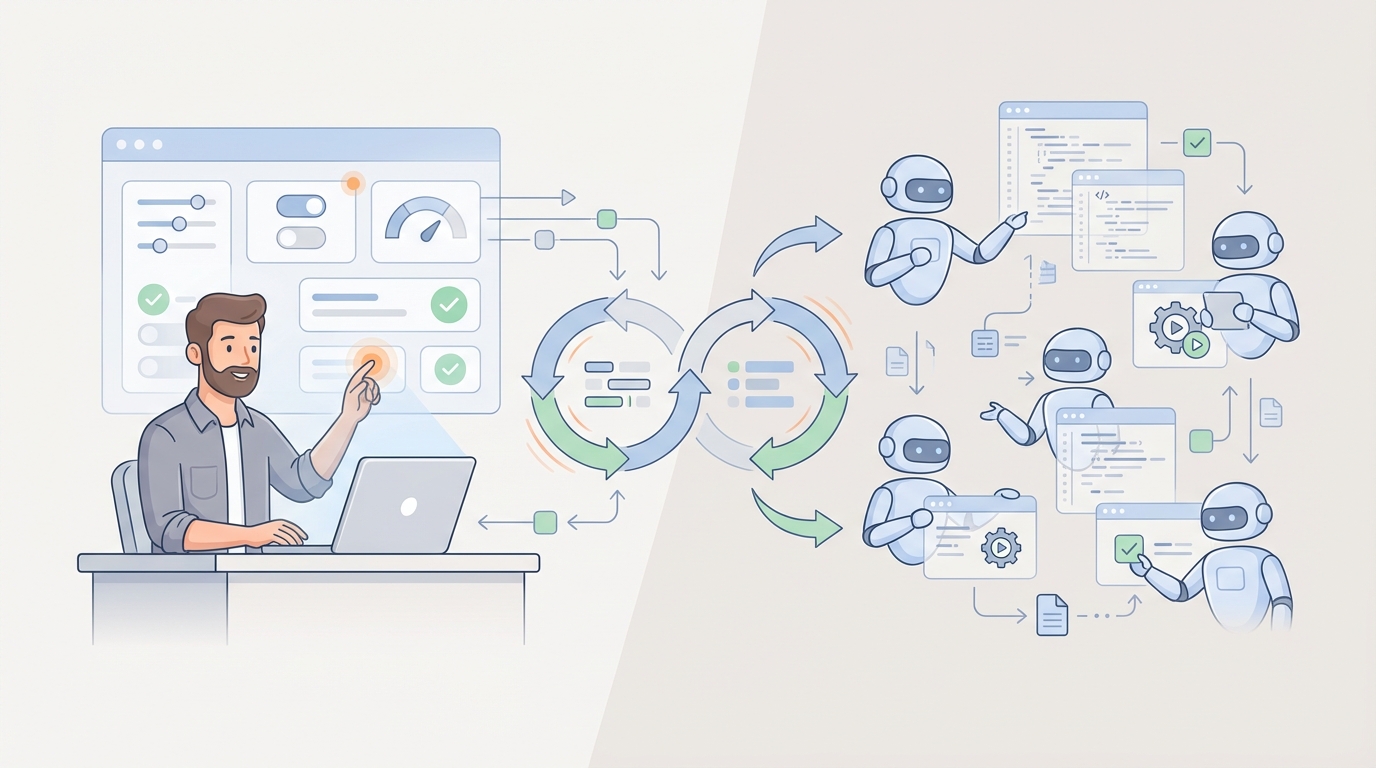

The New QA Skillset

The testers who thrive will be the ones who learn to work with AI effectively. That means:

- Writing structured prompts that produce useful test scenarios

- Orchestrating AI testing tools as part of a broader test strategy

- Designing test architectures that leverage AI for coverage while reserving human effort for judgment

- Understanding where AI is reliable and where it needs human oversight

Think of it this way: AI raises the floor for QA. Every team gets access to decent baseline testing. But the ceiling - the quality of testing that separates good products from great ones - that still depends on skilled humans.

The Future of Software Testing - What Comes Next

Here is our honest prediction for where this is heading.

AI will handle the grunt work. The repetitive regression tests, the boilerplate validations, the data generation, the initial test case brainstorming. This frees up human testers to focus on the work that actually requires expertise.

QA testers will evolve into QA engineers who orchestrate AI agents. You will spend less time writing "verify that the login button is visible" and more time designing test strategies, evaluating AI-generated test plans, and doing the creative exploratory work that finds the bugs that matter.

Testing coverage will go up, not headcount down. There is actually a well-known economic principle that explains why. In 1865, economist William Stanley Jevons observed that when steam engines became more fuel-efficient, coal consumption did not drop - it skyrocketed. Cheaper energy meant more uses for energy, which meant more total demand. This is called the Jevons Paradox, and it applies directly to AI in QA. When AI makes testing faster and cheaper, companies do not test less - they test more. They cover the edge cases they used to skip. They add performance testing they never had budget for. They run exploratory sessions they could not staff before. Most teams today are dramatically under-testing their software. AI does not eliminate the need - it reveals how much unmet demand there always was.

Companies that embrace the AI-plus-human approach will ship faster and with higher quality than those that try to go all-in on either AI or manual testing alone.

Your Job Is Not Going Away - But It Is Changing

If you are a QA tester worried about AI taking your job, here is the bottom line: the testers who adapt will become more valuable, not less. The ones who learn to leverage AI as a force multiplier - using it to brainstorm, to handle repetitive work, to expand coverage - will find themselves doing more interesting work with a bigger impact.

The testers who refuse to engage with AI at all? They will struggle. Not because AI replaced them, but because their peers who use AI will simply be more productive.

So start experimenting. Try feeding your next feature spec to an AI and asking it to brainstorm test scenarios. Cluster them into happy paths and edge cases. See what it gets right and what it misses. That gap between AI output and reality - that is where your expertise lives. And it is not going anywhere.
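If you want to keep score during that experiment, a rough sketch like the one below is enough. It compares the scenarios the AI proposed with the ones you wrote yourself. The scenario names are invented for illustration, and matching on exact text (ignoring case and surrounding spaces) is a crude assumption. In practice you would match scenarios by hand.

```python
def review_gap(ai_scenarios, tester_scenarios):
    """Split scenarios by who proposed them, ignoring case and surrounding spaces."""
    ai = {s.strip().lower() for s in ai_scenarios}
    tester = {s.strip().lower() for s in tester_scenarios}
    return {
        "both": sorted(ai & tester),
        "ai_only": sorted(ai - tester),
        "missed_by_ai": sorted(tester - ai),
    }


# Hypothetical scenario lists for a login feature.
ai_output = [
    "Valid password login",
    "Wrong password shows error",
    "Locked account is rejected",
]
tester_list = [
    "valid password login",
    "SSO session expires mid-checkout",
    "Locked account is rejected",
]

gap = review_gap(ai_output, tester_list)
```

The "missed_by_ai" bucket is the part worth reading closely. It is a running list of where your domain knowledge is doing work the tool cannot.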

Ready to see how AI can augment your testing workflow? Try TestCollab's QA Copilot and experience the future of software testing firsthand.