Something fundamental is changing in how software gets built and tested. AI agents in software testing are no longer a distant idea; the shift is already well underway.

Andrej Karpathy - former AI lead at Tesla, co-founder of OpenAI - recently open-sourced a project called autoresearch. The idea is deceptively simple: give an AI agent a small codebase and a clear objective, then let it run experiments autonomously overnight. The entire system is about 630 lines of code. The human writes instructions in a Markdown file, and the agent iterates on the code.

As Karpathy put it:

"the human iterates on the prompt (.md) - the AI agent iterates on the training code (.py)."

No complex orchestration. No massive framework. Just a tight loop where a minimal agent modifies code, evaluates the result, keeps what works, and discards what doesn't - roughly 100 experiments overnight on a single GPU.
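The keep-or-discard loop described above is essentially greedy hill climbing. The sketch below is not code from Karpathy's autoresearch repository; it only illustrates the pattern under stated assumptions. `propose` is a hypothetical stand-in for the agent editing the code, `evaluate` is a hypothetical stand-in for a short experiment run, and lower scores are assumed to be better (as with a validation loss).

```python
import random
from typing import Callable, TypeVar

Candidate = TypeVar("Candidate")


def keep_or_discard_loop(
    baseline: Candidate,
    propose: Callable[[Candidate], Candidate],  # hypothetical: the agent's edit step
    evaluate: Callable[[Candidate], float],     # hypothetical: one experiment, lower is better
    budget: int,
) -> tuple[Candidate, float]:
    """Propose a change, evaluate it, keep it only if it beats the current best."""
    best = baseline
    best_score = evaluate(best)
    for _ in range(budget):
        candidate = propose(best)    # modify the current best version
        score = evaluate(candidate)  # run the experiment
        if score < best_score:       # keep what works...
            best, best_score = candidate, score
        # ...and discard what doesn't: a worse candidate is simply dropped
    return best, best_score


if __name__ == "__main__":
    # Toy stand-in for "experiments": nudge a number toward the minimum of (x - 3)^2.
    rng = random.Random(0)
    best, score = keep_or_discard_loop(
        baseline=0.0,
        propose=lambda x: x + rng.uniform(-1.0, 1.0),
        evaluate=lambda x: (x - 3.0) ** 2,
        budget=100,
    )
    print(f"best={best:.3f} score={score:.4f}")
```

Because only improvements are kept, the result can never be worse than the baseline; the trade-off is that a purely greedy loop can get stuck on a local optimum, which is acceptable when each experiment is cheap and the agent runs many of them.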

This is a pattern we've been paying close attention to at TestCollab.

AI Agents for Software Testing - Why Now?

If an AI agent can autonomously run ML research experiments, the same loop can be applied to software testing. Imagine an agent that can:

- Look at your application, identify areas lacking test coverage, and write new test cases

- Run a test suite, analyze failures, and propose fixes - without waiting for a human to triage

- Learn from past test runs to prioritize what matters and skip what doesn't

From Copilot to Autonomous Testing Agent

Today, TestCollab's QA Copilot helps you generate test cases from plain English and convert manual tests into automated scripts. It already saves teams significant time by taking over the tedious, repetitive work of writing test scripts by hand.

But copilots assist. Agents act.

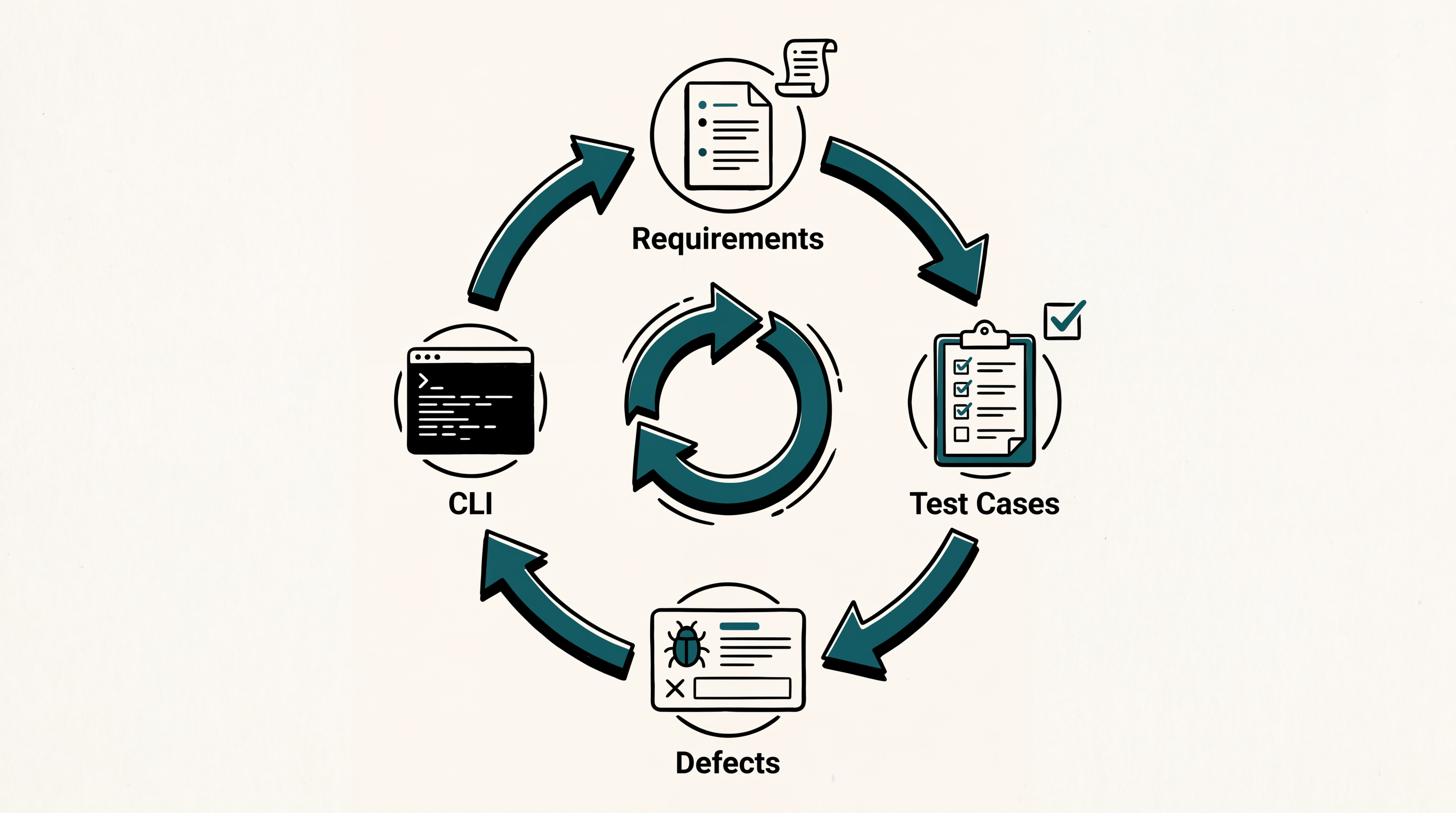

The next evolution of QA Copilot will introduce autonomous agents across three key areas:

- Authoring - Agents that draft test cases, generate scenarios from requirements, and keep your test suite comprehensive without manual effort

- Planning and automation - Agents that decide what to test, in what order, and execute the runs - optimizing for coverage and speed

- Impact analysis - Agents that watch your code changes and tell you exactly which tests are affected, what's at risk, and what new coverage is needed
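This post does not describe how TestCollab's impact analysis will work. One common technique is to record which source files each test exercises (a per-test coverage map) and select the tests whose footprint overlaps the changed files; changed files that no test touches point to where new coverage is needed. A minimal sketch, assuming such a map already exists; `select_affected_tests` and `uncovered_changes` are hypothetical names used only for illustration.

```python
from collections.abc import Iterable, Mapping


def select_affected_tests(
    coverage_map: Mapping[str, set[str]],  # assumed: test name -> files it exercises
    changed_files: Iterable[str],
) -> list[str]:
    """Return the tests whose recorded coverage touches any changed file."""
    changed = set(changed_files)
    return sorted(test for test, files in coverage_map.items() if files & changed)


def uncovered_changes(
    coverage_map: Mapping[str, set[str]],
    changed_files: Iterable[str],
) -> list[str]:
    """Return changed files that no test exercises, i.e. candidates for new tests."""
    covered = set().union(*coverage_map.values())
    return sorted(set(changed_files) - covered)


if __name__ == "__main__":
    coverage = {
        "test_login": {"auth.py", "session.py"},
        "test_checkout": {"cart.py", "payment.py"},
        "test_profile": {"auth.py", "profile.py"},
    }
    changed = ["auth.py", "search.py"]
    print(select_affected_tests(coverage, changed))  # ['test_login', 'test_profile']
    print(uncovered_changes(coverage, changed))      # ['search.py']
```

File-level granularity keeps the map small but over-selects tests; finer-grained maps (per function or per line) select fewer tests at the cost of more bookkeeping.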

Build Your Own AI QA Agents

We're not just building agents for you - we're building a platform where you can build your own.

Following the philosophy that the best AI agents are minimal and focused, TestCollab will let teams define custom agents using simple, declarative configurations:

- Deployment smoke testing - An agent that monitors your staging environment and runs smoke tests every time a deployment lands

- PR test coverage - An agent that reviews new pull requests and suggests test scenarios for changed code

- Regression watchdog - An agent that continuously runs regression suites and alerts you the moment something breaks

What's Next

We'll be sharing more details in the coming weeks - early access, agent templates, and deeper integration with your existing CI/CD pipelines. If you're already using QA Copilot, you're in a great position to be among the first to try autonomous testing agents.

The first piece of this is already shipping. See our hands-on walkthrough of AI in software testing with QA agents, in which humans curate a TestCollab test plan and an agent (Hermes, Claude Code, or Codex) drives a real browser to execute it.

The era of AI agents that can reason about your test suite, run experiments autonomously, and continuously improve your quality process is here. We're excited to bring it to TestCollab.

Be among the first to try autonomous AI agents for QA.